Apache Kafka Raft 是一种共识协议,它的引入是为了消除 Kafka 对 ZooKeeper 的元数据管理的依赖,被社区称之为 Kafka Raft metadata mode,简称 KRaft 模式。本文介绍了KRaft模式及三节点的 KRaft 集群搭建。

1 KRaft介绍

KRaft 简介

KRaft 运行模式的 Kafka 集群,不会将元数据存储在 Apache ZooKeeper中。即部署新集群的时候,无需部署 ZooKeeper 集群,因为 Kafka 将元数据存储在 controller 节点的 KRaft Quorum中。KRaft 可以带来很多好处,比如可以支持更多的分区,更快速的切换 controller ,也可以避免 controller 缓存的元数据和Zookeeper存储的数据不一致带来的一系列问题。

在3.0版本中可以体验 KRaft 集群模式的运行效果(请注意目前还不成熟,官方不建议生产使用)。

KRaft 架构

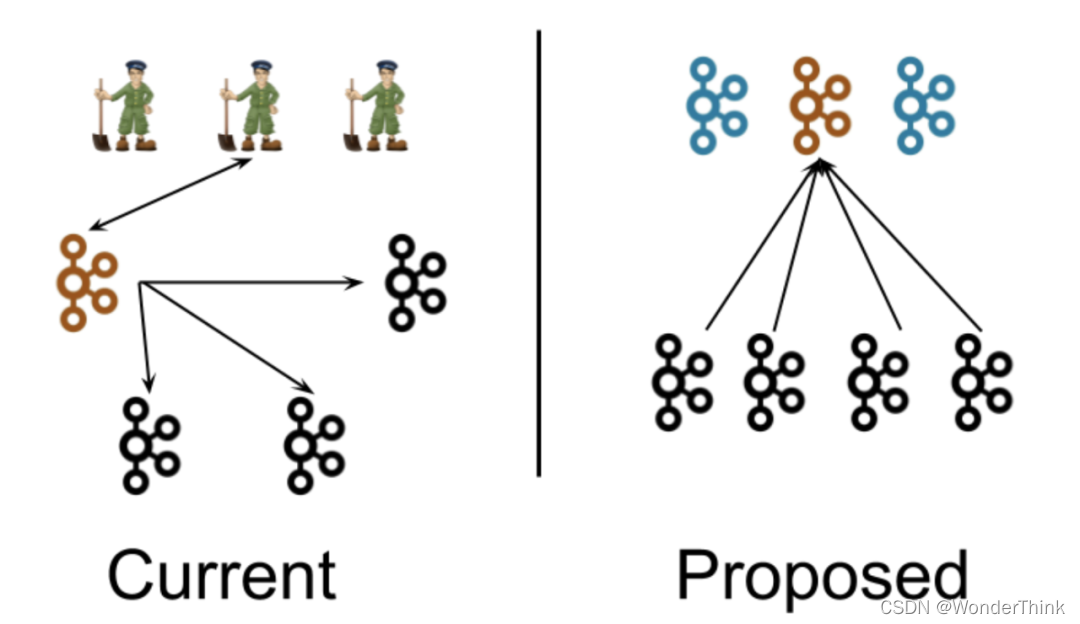

首先来看一下 KRaft 在系统架构层面和之前的版本有什么区别。KRaft 模式提出了去 Zookeeper后的 Kafka 整体架构如下,下图是前后的架构图对比:

在当前架构中,Kafka集群包含多个broker节点和一个ZooKeeper 集群。我们在这张图中描绘了一个典型的集群结构:4个broker节点和3个ZooKeeper节点。Kafka 集群的controller (橙色)在被选中后,会从 ZooKeeper 中加载它的状态。controller 指向其他 broker节点的箭头表示 controller 在通知其他 broker 发生了变更。

在新的架构中,三个 controller 节点替代三个ZooKeeper节点。控制器节点和 broker 节点运行在不同的进程中。controller 节点中会选择一个成为Leader(橙色)。新的架构中,控制器不会向 broker 推送更新,而是 broker 从这个 controller Leader 拉取元数据的更新信息。

需要特别注意的是,尽管 controller 进程在逻辑上与 broker 进程是分离的,但它们不需要在物理上分离。即在某些情况下,部分或所有 controller 进程和 broker 进程是可以是同一个进程,即一个broker节点即是broker也是controller。另外在同一个节点上可以运行两个进程,一个是controller进程,一个是broker进程,这相当于在较小的集群中,ZooKeeper进程可以像Kafka broker一样部署在相同的节点上。

Controller 服务器

在KRaft模式下,只有一小部分特别指定的服务器可以作为控制器,在Server.properties的Process.roles 参数里面配置。不像基于ZooKeeper的模式,任何服务器都可以成为控制器。这带来了一个非常优秀的好处,即如果我们认为 controller 节点的负载会比其他只当做broker节点高,那么配置为 controller 节点就可以使用高配的机器。这就解决了在1.0, 2.0架构中, controller 节点会比其他节点负载高,却无法控制哪些节点能成为 controller 节点的问题。

被选中的 controller 节点将参与元数据集群的选举。对于当前的 controller 节点,每个控制器服务器要么是Active的,要么是Standby的。

用户通常会选择3或5台(奇数台)服务器成为 controller 节点,3和5的个数问题和Raft的原理一样,少数服从多数。这取决于成本和系统在不影响可用性的情况下应该承受的并发故障数量等因素。

就像使用ZooKeeper一样,为了保持可用性,你必须让大部分 controller 保持active状态。如果你有3个控制器,你可以容忍1个故障;在5个控制器中,您可以容忍2个故障。

Process Roles

每个Kafka服务器现在都有一个新的配置项,叫做process.roles, 这个参数可以有以下值:

- 如果process.roles = broker, 服务器在KRaft模式中充当 broker。

- 如果process.roles = controller, 服务器在KRaft模式下充当 controller。

- 如果process.roles = broker,controller,服务器在KRaft模式中同时充当 broker 和controller。

- 如果process.roles 没有设置。那么集群就假定是运行在ZooKeeper模式下。

对于简单的场景,组合节点更容易运行和部署,可以避免多进程运行时,JVM带来的相关的固定内存开销。关键的缺点是,控制器将较少地与系统的其余部分隔离。例如,如果代理上的活动导致内存不足,则服务器的控制器部分不会与该OOM条件隔离。

Quorum Voters

系统中的所有节点都必须设置 controller.quorum.voters 配置。这个配置标识有哪些节点是 Quorum 的投票者节点。所有想成为控制器的节点都需要包含在这个配置里面。这类似于在使用ZooKeeper时,使用ZooKeeper.connect配置时必须包含所有的ZooKeeper服务器。

然而,与ZooKeeper配置不同的是,controller.quorum.voters 配置需要包含每个节点的id。格式为: id1@host1:port1,id2@host2:port2。

因此,如果你有10个broker和 3个控制器,分别命名为controller1、controller2、controller3,你可能在 controller1上有以下配置:

process.roles=controller

node.id=1

listeners=CONTROLLER://controller1.example.com:9093

controller.quorum.voters=1@controller1.example.com:9093,2@controller2.example.com:9093,3@controller3.example.com:9093

- 1

- 2

- 3

- 4

每个broker和每个controller 都必须设置 controller.quorum.voters。需要注意的是,controller.quorum.voters 配置中提供的节点ID必须与提供给服务器的节点ID匹配。

比如在controller1上,node.id必须设置为1,以此类推。注意,控制器id不强制要求你从0或1开始。客户端不需要配置controller.quorum.voters,只有服务端需要配置。

2 KRaft 三节点集群搭建

2.1 环境准备

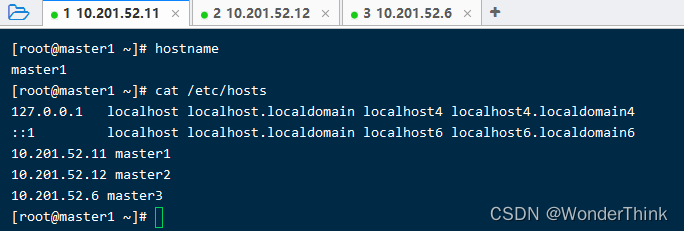

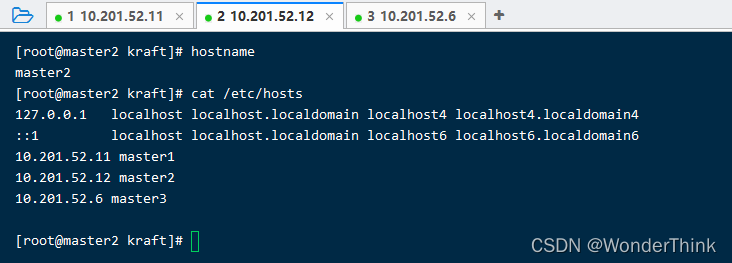

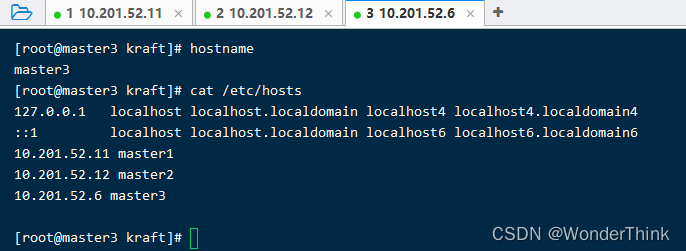

准备三台机器:

| hostname | ip | node.id |

|---|---|---|

| master1 | 10.201.52.11 | 1 |

| master2 | 10.201.52.12 | 2 |

| master3 | 10.201.52.6 | 3 |

修改三台机器的hostname

# 机器10.201.52.11上执行

$ hostnamectl set-hostname master1

机器10.201.52.12上执行

$ hostnamectl set-hostname master2

机器10.201.52.6上执行

$ hostnamectl set-hostname master3

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

在三台文件的/etc/hosts文件中追加所有的ip及其hostname

关闭防火墙

systemctl stop firewalld

systemctl disable firewalld

- 1

- 2

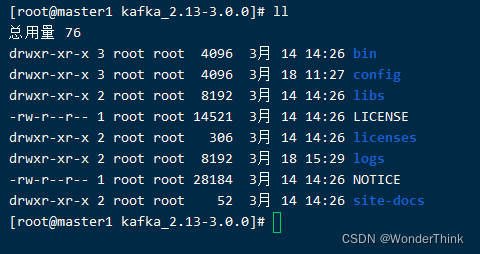

下载 kafka 并解压

kafka下载地址 https://kafka.apache.org/downloads

kafka版本号:3.0

tar -zxvf kafka_2.13-3.0.0.tgz

- 1

2.2 KRaft 配置及启动

配置 server.properties

master1节点的./config/kraft/server.properties配置

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

This configuration file is intended for use in KRaft mode, where

Apache ZooKeeper is not present. See config/kraft/README.md for details.

############################# Server Basics #############################

The role of this server. Setting this puts us in KRaft mode

process.roles=broker,controller

The node id associated with this instance's roles

node.id=1

The connect string for the controller quorum

controller.quorum.voters=1@master1:19091,2@master2:19091,3@master3:19091

############################# Socket Server Settings #############################

The address the socket server listens on. It will get the value returned from

java.net.InetAddress.getCanonicalHostName() if not configured.

FORMAT:

listeners = listener_name://host_name:port

EXAMPLE:

listeners = PLAINTEXT://your.host.name:9092

listeners=PLAINTEXT://:9092,CONTROLLER://:19091

inter.broker.listener.name=PLAINTEXT

Hostname and port the broker will advertise to producers and consumers. If not set,

it uses the value for "listeners" if configured. Otherwise, it will use the value

returned from java.net.InetAddress.getCanonicalHostName().

advertised.listeners=PLAINTEXT://:9092

Listener, host name, and port for the controller to advertise to the brokers. If

this server is a controller, this listener must be configured.

controller.listener.names=CONTROLLER

Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details

listener.security.protocol.map=CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL

The number of threads that the server uses for receiving requests from the network and sending responses to the network

num.network.threads=3

The number of threads that the server uses for processing requests, which may include disk I/O

num.io.threads=8

The send buffer (SO_SNDBUF) used by the socket server

socket.send.buffer.bytes=102400

The receive buffer (SO_RCVBUF) used by the socket server

socket.receive.buffer.bytes=102400

The maximum size of a request that the socket server will accept (protection against OOM)

socket.request.max.bytes=104857600

############################# Log Basics #############################

A comma separated list of directories under which to store log files

log.dirs=/tmp/kraft-combined-logs

The default number of log partitions per topic. More partitions allow greater

parallelism for consumption, but this will also result in more files across

the brokers.

num.partitions=1

The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.

This value is recommended to be increased for installations with data dirs located in RAID array.

num.recovery.threads.per.data.dir=1

############################# Internal Topic Settings #############################

The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"

For anything other than development testing, a value greater than 1 is recommended to ensure availability such as 3.

offsets.topic.replication.factor=3

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

############################# Log Flush Policy #############################

Messages are immediately written to the filesystem but by default we only fsync() to sync

the OS cache lazily. The following configurations control the flush of data to disk.

There are a few important trade-offs here:

1. Durability: Unflushed data may be lost if you are not using replication.

2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.

3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.

The settings below allow one to configure the flush policy to flush data after a period of time or

every N messages (or both). This can be done globally and overridden on a per-topic basis.

The number of messages to accept before forcing a flush of data to disk

log.flush.interval.messages=10000

The maximum amount of time a message can sit in a log before we force a flush

log.flush.interval.ms=1000

############################# Log Retention Policy #############################

The following configurations control the disposal of log segments. The policy can

be set to delete segments after a period of time, or after a given size has accumulated.

A segment will be deleted whenever either of these criteria are met. Deletion always happens

from the end of the log.

The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168

A size-based retention policy for logs. Segments are pruned from the log unless the remaining

segments drop below log.retention.bytes. Functions independently of log.retention.hours.

log.retention.bytes=1073741824

The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824

The interval at which log segments are checked to see if they can be deleted according

to the retention policies

log.retention.check.interval.ms=3000

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

- 100

- 101

- 102

- 103

- 104

- 105

- 106

- 107

- 108

- 109

- 110

- 111

- 112

- 113

- 114

- 115

- 116

- 117

- 118

- 119

- 120

- 121

- 122

- 123

- 124

- 125

- 126

- 127

- 128

master2、master3节点的/config/kraft/server.properties配置与master1的仅有node.id不同,其他都一致。

master1:

node.id=1

- 1

master2:

node.id=2

- 1

master3:

node.id=3

- 1

生成集群 ID

整个集群有一个唯一的ID标志,使用uuid。可使用官方提供的 kafka-storage 工具生成,亦可以自己去用其他生成uuid。

$ ./bin/kafka-storage.sh random-uuid

xtzWWN4bTjitpL3kfd9s5g

- 1

- 2

格式化存储目录

使用上面生成集群 uuid, 在三个节点上都执行格式化存储目录命令:

$ ./bin/kafka-storage.sh format -t xtzWWN4bTjitpL3kfd9s5g -c ./config/kraft/server.properties

- 1

启功节点服务

最后,在已准备好在每个节点上启动 Kafka 服务器。

$ ./bin/kafka-server-start.sh ./config/kraft/server.properties

- 1

至此,三节点的Kafka KRaft集群已开启,接下来进行测试。

2.3 测试

可以连接到端口 9092(或您配置的任何端口)来执行管理操作或生产消费数据。

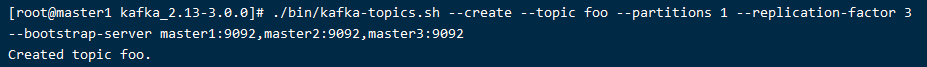

创建拥有3个副本的topic

$ ./bin/kafka-topics.sh --create --topic foo --partitions 1 --replication-factor 3 --bootstrap-server master1:9092,master2:9092,master3:9092

- 1

查看topic列表

$ ./bin/kafka-topics.sh --list --bootstrap-server master1:9092,master2:9092,master3:9092

- 1

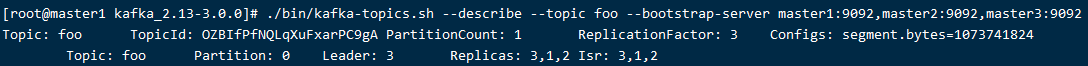

查看topic的详细信息

$ ./bin/kafka-topics.sh --describe --topic foo --bootstrap-server master1:9092,master2:9092,master3:9092

- 1

说明:

partiton: partion id,由于此处只有一个partition,因此partition id 为0

leader:当前负责读写的lead broker id

relicas:当前partition的所有replication broker list

isr:relicas的子集,只包含出于活动状态的broker

什么是ISR?

分区中的所有副本统称为AR(Assigned Replicas)。所有与leader副本保持一定程度同步的副本(包括leader)组成ISR(in-sync replicas)。而与leader副本同步滞后过多的副本(不包括leader),组成OSR(out-sync replicas),所以,AR = ISR + OSR。在正常情况下,所有的follower副本都应该与leader副本保持一定程度的同步,即AR = ISR,OSR集合为空。

开启消费者

$ ./bin/kafka-console-consumer.sh --bootstrap-server master1:9092,master2:9092,master3:9092 --topic foo

- 1

开启生产者

$ ./bin/kafka-console-producer.sh --broker-list master1:9092,master2:9092,master3:9092 --topic foo

- 1

删除topic

当该topic的所有生产者和消费者都关闭后,才可以删除topic。

$ ./bin/kafka-topics.sh --delete --topic foo3 --bootstrap-server master1:9092,master2:9092,master3:9092

- 1

拓展阅读: