https://developer.aliyun.com/article/1006775

Servers (one cluster, EC 12:4)

| Host | IP | Drives |
| --- | --- | --- |
| storage01 | 172.16.50.1 | 12 × 10T |
| storage02 | 172.16.50.2 | 12 × 10T |
| storage03 | 172.16.50.3 | 12 × 10T |
| storage04 | 172.16.50.4 | 12 × 10T |

Clients

| Host | IP |
| --- | --- |
| headnode | 172.16.50.5 |
| node02 | 172.16.50.6 |
| node03 | 172.16.50.7 |
| node04 | 172.16.50.8 |

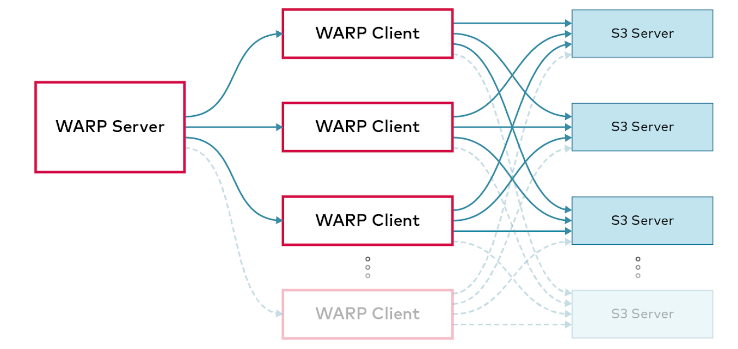

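speedtest is an easy-to-use test tool: it runs PUTs first, then GETs, and increases the load step by step to find the maximum throughput. warp, by contrast, is a complete toolchain with independent test items, so GET, PUT, DELETE, and other operations can each be benchmarked on their own. Its client/server design also matches real usage more closely, so the results are closer to what an application would see, which helps with performance analysis.

The structure of warp is shown in the figure.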
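As a rough sketch of the client/server layout described above, the script below prints the commands one might run, assuming the four client machines listed earlier act as warp agents and the four storage nodes are the targets. The article does not show its actual warp invocation, so the host lists, the `1m` duration, and the `warp_cmd` helper are illustrative assumptions, and the credentials are placeholders. The script only prints the commands so they can be reviewed before anything is run.

```shell
# Assumed endpoints, taken from the host tables in this article.
SERVERS="172.16.50.1:9000,172.16.50.2:9000,172.16.50.3:9000,172.16.50.4:9000"
CLIENTS="172.16.50.5,172.16.50.6,172.16.50.7,172.16.50.8"

# Hypothetical helper: print (not run) the coordinator command for one
# warp operation such as get, put or delete. $2 is the duration (default 1m).
warp_cmd() {
  printf 'warp %s --warp-client=%s --host=%s --access-key=%s --secret-key=%s --duration=%s\n' \
    "$1" "$CLIENTS" "$SERVERS" '<YOUR-ACCESS-KEY>' '<YOUR-SECRET-KEY>' "${2:-1m}"
}

# Step 1, on every client node: start a warp agent that waits for the coordinator.
echo "warp client"

# Step 2, on one coordinating machine: run each operation as its own benchmark.
for op in put get delete; do
  warp_cmd "$op" 1m
done
```

Keeping each operation in a separate run mirrors the point made above: PUT, GET, and DELETE results are reported independently rather than as one combined figure.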


wget https://dl.min.io/client/mc/release/linux-amd64/mc

chmod +x mc

mv mc /usr/local/bin/

/usr/local/bin/mc alias set minio http://172.16.50.1:9000 <YOUR-ACCESS-KEY> <YOUR-SECRET-KEY>

/usr/local/bin/mc support perf minio/<bucket>

This reports network, drive, and throughput results:

NODE RX TX

http://storage01:9000 1.8 GiB/s 2.0 GiB/s

http://storage02:9000 1.8 GiB/s 2.0 GiB/s

http://storage03:9000 2.7 GiB/s 2.5 GiB/s

http://storage04:9000 2.4 GiB/s 2.2 GiB/s

NetPerf: ✔
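The drive results that follow contain one row per drive per node, which makes node-to-node comparison tedious by eye. A minimal sketch for condensing such rows into per-node averages is shown here, assuming the output has been saved as plain text with rows shaped like `NODE PATH READ MiB/s WRITE MiB/s`. The function name `summarize_drive_perf` is hypothetical, and rows that are incomplete or use a different unit are skipped rather than converted.

```shell
# Average READ/WRITE throughput per node from drive rows read on stdin.
summarize_drive_perf() {
  awk '
    # Keep only complete drive rows: 6 fields, a path in $2, both units MiB/s.
    NF == 6 && $2 ~ /^\// && $4 == "MiB/s" && $6 == "MiB/s" {
      n[$1]++; r[$1] += $3; w[$1] += $5
    }
    END {
      for (node in n)
        printf "%s drives=%d avg_read=%.1f avg_write=%.1f MiB/s\n",
               node, n[node], r[node] / n[node], w[node] / n[node]
    }
  ' | sort
}

# Usage with a few rows copied from the output in this article;
# the last row is truncated on purpose and is ignored.
summarize_drive_perf <<'EOF'
http://storage02:9000 /data1 192 MiB/s 235 MiB/s
http://storage02:9000 /data2 219 MiB/s 240 MiB/s
http://storage01:9000 /data1 99 MiB/s 119 MiB/s
http://storage01:9000 /data2 103 MiB/s 114 MiB/s
http://storage01:9000 /data4
EOF
# prints:
# http://storage01:9000 drives=2 avg_read=101.0 avg_write=116.5 MiB/s
# http://storage02:9000 drives=2 avg_read=205.5 avg_write=237.5 MiB/s
```

Averaging per node is only one possible summary; a minimum per node would be a better choice when looking for a single slow drive.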

NODE PATH READ WRITE

http://storage02:9000 /data1 192 MiB/s 235 MiB/s

http://storage02:9000 /data2 219 MiB/s 240 MiB/s

http://storage02:9000 /data3 186 MiB/s 262 MiB/s

http://storage02:9000 /data4 191 MiB/s 230 MiB/s

http://storage02:9000 /data5 179 MiB/s 221 MiB/s

http://storage02:9000 /data6 170 MiB/s 222 MiB/s

http://storage02:9000 /data7 200 MiB/s 219 MiB/s

http://storage02:9000 /data8 198 MiB/s 230 MiB/s

http://storage02:9000 /data9 166 MiB/s 207 MiB/s

http://storage02:9000 /data10 206 MiB/s 209 MiB/s

http://storage02:9000 /data11 198 MiB/s 213 MiB/s

http://storage02:9000 /data12 164 MiB/s 205 MiB/s

http://storage04:9000 /data1 172 MiB/s 259 MiB/s

http://storage04:9000 /data2 201 MiB/s 250 MiB/s

http://storage04:9000 /data3 218 MiB/s 256 MiB/s

http://storage04:9000 /data4 188 MiB/s 245 MiB/s

http://storage04:9000 /data5 157 MiB/s 197 MiB/s

http://storage04:9000 /data6 155 MiB/s 205 MiB/s

http://storage04:9000 /data7 154 MiB/s 210 MiB/s

http://storage04:9000 /data8 143 MiB/s 185 MiB/s

http://storage04:9000 /data9 181 MiB/s 207 MiB/s

http://storage04:9000 /data10 174 MiB/s 214 MiB/s

http://storage04:9000 /data11 173 MiB/s 218 MiB/s

http://storage04:9000 /data12 178 MiB/s 206 MiB/s

http://storage03:9000 /data1 194 MiB/s 337 MiB/s

http://storage03:9000 /data2 204 MiB/s 267 MiB/s

http://storage03:9000 /data3 212 MiB/s 261 MiB/s

http://storage03:9000 /data4 200 MiB/s 235 MiB/s

http://storage03:9000 /data5 168 MiB/s 216 MiB/s

http://storage03:9000 /data6 209 MiB/s 221 MiB/s

http://storage03:9000 /data7 179 MiB/s 222 MiB/s

http://storage03:9000 /data8 170 MiB/s 220 MiB/s

http://storage03:9000 /data9 144 MiB/s 186 MiB/s

http://storage03:9000 /data10 142 MiB/s 172 MiB/s

http://storage03:9000 /data11 149 MiB/s 171 MiB/s

http://storage03:9000 /data12 132 MiB/s 206 MiB/s

http://storage01:9000 /data1 99 MiB/s 119 MiB/s

http://storage01:9000 /data2 103 MiB/s 114 MiB/s

http://storage01:9000 /data3 104 MiB/s 114 MiB/s

http://storage01:9000 /data4